Best AEO Content Scoring Tools For AI Citations

- AEO content scoring tools evaluate whether individual pages are structurally ready for AI citation by measuring answer formatting, semantic coverage, entity depth, and schema signals

- High-quality scoring platforms break down what influenced the score and provide prioritized, implementable edits tied to measurable citation signals

- Cross-engine scoring is essential because ChatGPT, Perplexity, and Google AI surfaces apply different extraction and citation patterns

- Portfolio-level scoring helps teams rank refresh opportunities by citation gap, competitive presence, and traffic impact instead of relying on manual audits

- Human review checkpoints remain critical for validating accuracy, brand voice, and claims before publishing score-driven updates

AEO content scoring tools help you answer a practical question before you invest in a refresh: Will AI search systems cite this page for the prompts that matter in your category?

Think: “Which project management tool is best for remote teams?” or “What’s the best payroll API for vertical SaaS?” These are the types of high-intent prompts AI engines now answer directly.

These tools score pages and drafts against citation-related signals like structure, semantic coverage, entity clarity, and answer formatting. They then highlight the specific changes that move the score, so teams can prioritize fixes and ship improvements faster.

A page can rank #1 on Google and still rarely appear in AI answers. Traditional SEO tools track rankings and backlinks, but they do not show whether ChatGPT, Perplexity, or Google AI Overviews will cite your content, or what to change to improve citation likelihood.

This guide breaks down what AEO content scoring tools do, how to evaluate them, and which options fit different team setups. It’s for SEO and content ops leads who want a repeatable way to assess pages and move improvements into production.

AEO content scoring tools at a glance

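Use this summary to quickly narrow the field based on your team size and whether you need execution built in.

- AirOps: Teams that need content scoring tied to bulk execution and publishing

- Contently: Enterprise teams that want draft scoring plus human editorial support

- Profound: Enterprise brands that want ML-based scoring across many AI engines

- Semrush: Teams already using Semrush that want an AEO scoring layer without switching platforms

- Rankability: Agencies that want real-time NLP scoring and coaching support

- AthenaHQ: SMBs that want multi-engine tracking with guided next steps

- Otterly.AI: Small teams that want an affordable entry point for AI visibility monitoring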

How we evaluated these tools

We reviewed each platform against criteria that matter to teams building AEO programs at scale:

- Scoring depth: Does the tool provide a clear content score tied to citation likelihood, or does it stop at monitoring?

- Cross-engine coverage: Does scoring reflect differences across ChatGPT, Perplexity, and Google AI surfaces?

- Recommendation quality: Do you get specific, implementable fixes or a generic score?

- Scale and operations: Can teams score and improve pages in bulk, or does it require one-by-one work?

- Integrations: Does the tool connect to GSC/GA4, content systems, or publishing tools?

- Transparency: Does the platform explain what moves the score and why?

- Usability and pricing: How quickly can a team get value, and do meaningful capabilities require enterprise-only plans?

What is an AEO content scoring tool?

An AEO content scoring tool evaluates a page or draft against signals that influence whether AI search systems can extract, trust, and cite it. The output usually includes:

- A quantitative score

- A breakdown of what helped or hurt the score

- A prioritized list of fixes that can increase citation rates
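
As a rough illustration, the three outputs above can be modeled as one small report object. This is a minimal sketch, not any vendor's method: the signal names, the weights, and the `build_report` helper are all assumptions made up for the example.

```python
from dataclasses import dataclass

# Hypothetical weights (assumption): real tools do not publish theirs.
WEIGHTS = {
    "answer_formatting": 0.30,
    "semantic_coverage": 0.30,
    "entity_clarity": 0.20,
    "schema": 0.20,
}

@dataclass
class ScoreReport:
    score: float      # overall score on a 0-100 scale
    breakdown: dict   # signal name -> points earned
    fixes: list       # signal names, largest remaining gap first

def build_report(signals: dict) -> ScoreReport:
    """Build a report from per-signal ratings between 0.0 and 1.0."""
    breakdown = {
        name: round(100 * weight * signals.get(name, 0.0), 1)
        for name, weight in WEIGHTS.items()
    }
    # Points still available per signal; these drive the fix priority.
    gaps = {
        name: round(100 * weight - breakdown[name], 1)
        for name, weight in WEIGHTS.items()
    }
    fixes = sorted(
        (name for name in gaps if gaps[name] > 0),
        key=lambda name: gaps[name],
        reverse=True,
    )
    return ScoreReport(round(sum(breakdown.values()), 1), breakdown, fixes)

report = build_report({
    "answer_formatting": 0.5,
    "semantic_coverage": 0.9,
    "entity_clarity": 1.0,
    "schema": 0.0,
})
print(report.score, report.fixes)
```

In this sketch the fix list is ordered by how many points each signal still leaves on the table, which is one simple way to make "what should we fix next?" fall directly out of the breakdown.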

Teams use scoring tools in three main scenarios: before publishing new content, when prioritizing refreshes across a library, and when validating whether edits improved citation readiness.

Scoring gives teams a clear answer to one question: what should we fix next?

What makes a great AEO content scoring tool?

Scoring matters most when it drives action. A strong tool makes it clear which pages to update, which to deprioritize, and what to publish next.

- Predictive scoring over retrospective tracking: Scoring should work on drafts and existing pages, not only after citations rise or fall.

- Cross-engine scoring logic: ChatGPT, Perplexity, and Google AI Overviews don't cite the same way. The tool should reflect that.

- Fixes that move the score: Teams need clear guidance tied to content structure, semantic coverage, entities, and schema.

- Bulk operations: Scoring one page at a time does not match real content portfolios.

- Governance and review: AI can propose edits, but humans still validate accuracy, voice, and claims before publishing.

- Clear feedback loops: Teams should be able to re-score after changes and see which fixes worked.
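
The feedback loop in the last point can be sketched as a simple before-and-after comparison. The example below assumes each scoring run yields a per-signal breakdown as a dictionary; the `score_deltas` helper and the sample numbers are hypothetical.

```python
def score_deltas(before: dict, after: dict) -> list:
    """Return (signal, change) pairs, largest improvement first."""
    signals = set(before) | set(after)
    deltas = [
        (name, round(after.get(name, 0.0) - before.get(name, 0.0), 1))
        for name in signals
    ]
    # Sort by change (descending), then by name so ties are stable.
    return sorted(deltas, key=lambda pair: (-pair[1], pair[0]))

# Illustrative breakdowns from two scoring runs of the same page.
before = {"answer_formatting": 15.0, "schema": 0.0, "entity_clarity": 20.0}
after = {"answer_formatting": 24.0, "schema": 20.0, "entity_clarity": 18.0}

changes = score_deltas(before, after)
regressions = [name for name, delta in changes if delta < 0]
print(changes)
print(regressions)
```

Listing regressions separately is a deliberate choice here: an edit that raises one signal can lower another, and a reviewer should see that before the update ships.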

7 best AEO content scoring tools (reviewed & compared)

Here are the 7 AEO content scoring tools we evaluated:

1. AirOps

Best for: Teams that need content scoring tied to bulk execution and publishing

Most AEO content scoring tools give you a number and a checklist, then stop. Teams still have to decide what to fix first, translate recommendations into real edits, route approvals, and publish updates across dozens or hundreds of URLs.

AirOps connects content scoring inputs directly to governed execution. Teams score and prioritize pages using AI search signals alongside GSC and GA4 context, then run refreshes in bulk with Brand Kits and built-in human review. That keeps scoring tied to shipped updates instead of turning into another backlog.

What AirOps automates in AEO content scoring

Content scoring inputs and page-level diagnosis

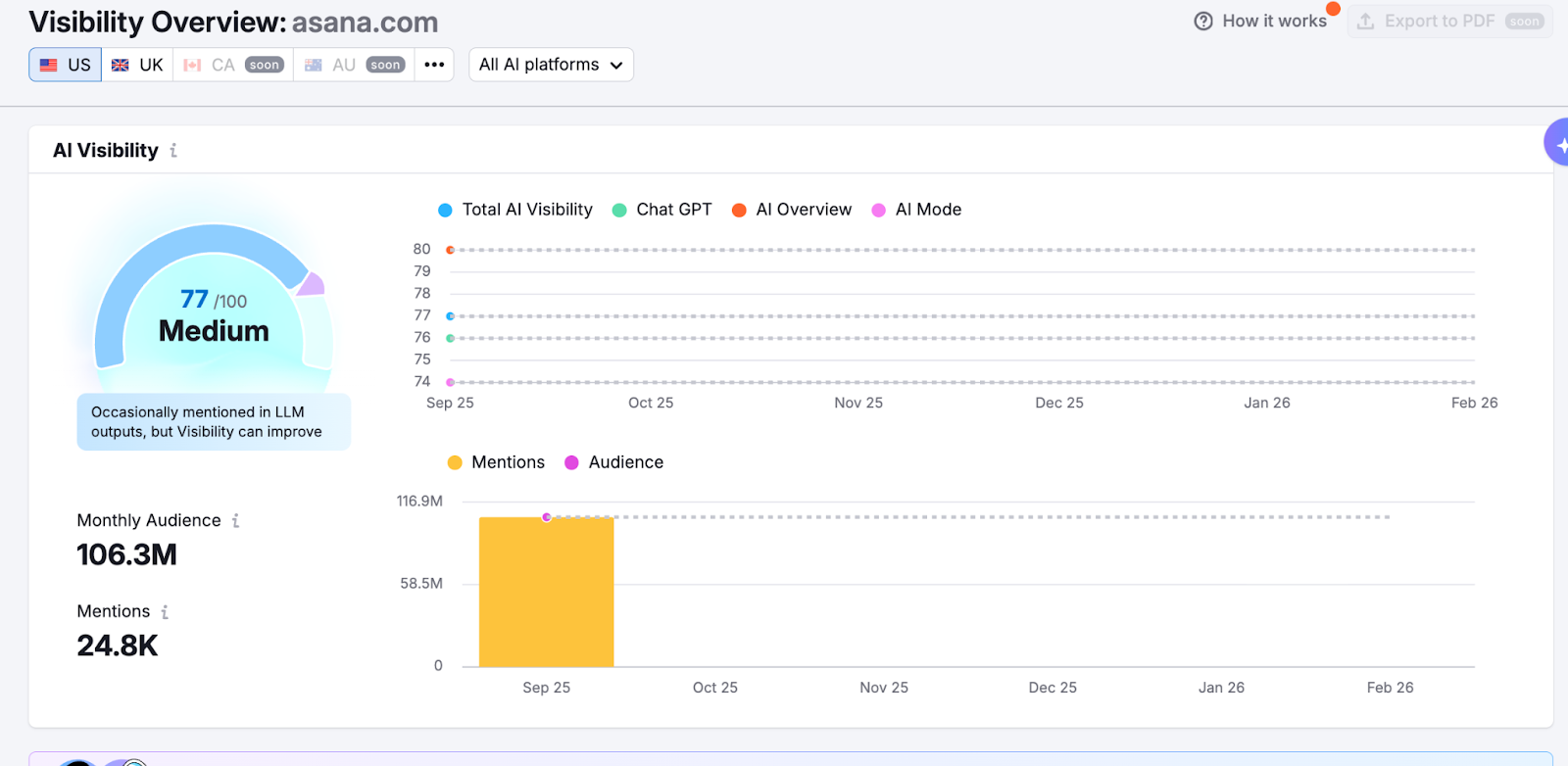

- Scores and diagnoses content using AI search signals like mention rate, share of voice, citation rate, sentiment, and average position across major AI engines

- Brings AI search signals together with Google Search Console and GA4 at the URL level to support scoring and prioritization decisions

- Tracks prompt sets over time so teams can see which pages show up in AI answers, which do not, and where competitors take the citation share

Prioritization and opportunity routing

- Surfaces prioritized actions across content gaps, refresh targets, and offsite opportunities tied to citation patterns

- Categorizes cited URLs by page type and domain type so teams can see what AI engines trust in their category

- Supports filter-based targeting, such as pages losing clicks while gaining citations, so teams can focus refresh effort where it has the strongest business impact
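
To make the filter-based targeting idea concrete, here is a minimal sketch of the "losing clicks while gaining citations" filter. It does not reflect how AirOps implements this; the `PageStats` fields, the `refresh_candidates` helper, and the sample URLs are assumptions, and the data would come from your own GSC and AI search exports.

```python
from dataclasses import dataclass

@dataclass
class PageStats:
    url: str
    clicks_prev: int      # clicks in the earlier period
    clicks_now: int       # clicks in the current period
    citations_prev: int   # AI citations in the earlier period
    citations_now: int    # AI citations in the current period

def refresh_candidates(pages: list) -> list:
    """Pages whose clicks fell while citations rose, biggest click loss first."""
    hits = [
        p for p in pages
        if p.clicks_now < p.clicks_prev and p.citations_now > p.citations_prev
    ]
    return sorted(hits, key=lambda p: p.clicks_prev - p.clicks_now, reverse=True)

# Illustrative data only.
pages = [
    PageStats("https://example.com/a", 900, 600, 2, 5),
    PageStats("https://example.com/b", 400, 450, 1, 4),
    PageStats("https://example.com/c", 300, 250, 3, 6),
    PageStats("https://example.com/d", 800, 500, 4, 4),
]

candidates = [p.url for p in refresh_candidates(pages)]
print(candidates)
```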

Execution and publishing

- Runs bulk content refresh and creation across many pages with scheduled execution and CMS publishing

- Pulls AI search answers, citations, and mentions into content production steps so writers work from live context

- Routes updates through human review with diff views before publishing

- Centralizes voice, audience rules, and product context through Brand Kits so edits stay consistent at scale

When AirOps fits

AirOps works best for teams that:

- Manage 100+ pages and need scoring to translate into refreshes without adding headcount

- Want one place to connect AI search signals with GSC and GA4 context for prioritization

- Run ongoing refresh programs and need a repeatable system for scoring, fixing, and publishing

- Need governance across multiple brands, regions, or client accounts

- Want to publish updates from the same system that identified the problem

When AirOps does not fit

AirOps may not fit if you:

- Manage fewer than 50 pages and prefer manual scoring and one-off updates

- Need pre-publication simulation as the primary requirement

- Only want single-engine tracking and do not plan to expand coverage

- Prefer point-and-click tools and do not want to configure execution steps

- Need mostly technical SEO remediation rather than content-level citation improvements

Pricing

AirOps offers three plans:

- Solo: Free tier with tracked prompts and pages, ChatGPT insights, monthly reports, and task limits

- Pro: Adds multi-engine coverage, weekly reports, higher task limits, research integrations, and unlimited seats

- Enterprise: Custom limits, multi-region tracking, advanced collaboration features, and dedicated support

2. Contently

Best for: Enterprise teams that want draft scoring plus human editorial support

Key features

- Scores drafts against 30+ answer-engine signals

- Auto schema injection for FAQ, HowTo, Product, and Organization markup

- Share of voice reporting across multiple AI engines

- Refresh triggers to address recency and drift

- API access for programmatic scoring
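
For readers unfamiliar with schema injection, FAQ markup generally means embedding a schema.org `FAQPage` object as JSON-LD in the page. The sketch below shows the shape of that markup only; it is not Contently's implementation, and the `faq_jsonld` helper and sample question are assumptions.

```python
import json

def faq_jsonld(pairs: list) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

markup = faq_jsonld([
    (
        "What is an AEO content scoring tool?",
        "It evaluates a page against signals that influence AI citation.",
    ),
])
# The result would be placed inside a <script type="application/ld+json"> tag.
print(markup)
```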

Pros

- Deep draft scoring across structure, entities, and schema

- Access to an editorial network for human rewrites

- Strong compliance posture for regulated industries

Cons

- No publicly available pricing

- Many performance claims come from vendor materials

- Limited third-party evaluation of scoring components

Pricing: Enterprise subscription; contact sales.

Who it fits: Large teams that want scoring and editorial support under one vendor.

3. Profound

Best for: Enterprise brands that want ML-based scoring across many AI engines

Key features

- Predictive scoring based on models trained on cited pages

- Coverage across many AI engines

- Multiple visibility and sentiment-related metrics

- Templates based on historically cited patterns

- Traffic attribution support for AI-sourced visits

Pros

- Broad engine coverage

- ML approach ties scoring to observed citation behavior

- Strong positioning in the AEO category

Cons

- Starter tier limits features and scope

- Pricing complexity increases by brand, region, and configuration

- Implementation can still require manual execution outside the tool

Pricing: Tiered; public details vary by plan.

Who it fits: Global brands that prioritize engine coverage and can support an enterprise rollout.

Learn more about how Profound stacks up against AirOps here.

4. Semrush

Best for: Teams already using Semrush that want an AEO scoring layer without switching platforms

Key features

- AI visibility scoring and competitor benchmarking

- Dashboards for mentions and trends across AI platforms

- Guided fixes inside Semrush content tooling

- Features that highlight AI-cited publications for outreach

Pros

- Low friction for existing Semrush users

- Adds AI visibility without rebuilding the whole stack

- Useful for teams starting to formalize AEO reporting

Cons

- Add-on orientation means AEO is not the platform core

- Scoring detail and limits can be hard to compare without a trial

- Teams still need a separate execution system for bulk refresh work

Pricing: AI toolkits are typically sold as add-ons to a base plan.

Who it fits: Hybrid SEO teams that want an on-ramp to AEO scoring inside a familiar interface.

5. Rankability

Best for: Agencies that want real-time NLP scoring and coaching support

Key features

- Real-time scoring using NLP engines

- AI search analyzer for monitoring presence

- AI writing support inside the platform

- Coaching calls and community education

Pros

- Live feedback during content edits

- Coaching helps teams adopt scoring as a habit

- Good fit for agencies training multiple writers

Cons

- Integrations can lag behind other content tools

- Feature depth varies across modules

- Cost can feel high for teams that only need scoring

Pricing: Starts around the mid-market range.

Who it fits: Agencies and consultants who want scoring plus enablement.

6. AthenaHQ

Best for: SMBs that want multi-engine tracking with guided next steps

Key features

- Monitoring across several AI engines

- Action center for suggested improvements

- Keyword-to-prompt generation

- Language and regional testing support

- Publishing integrations for some platforms

Pros

- Multi-engine coverage at a mid-market starting point

- Clear UI for teams new to AI search tracking

- Helpful for validation before large updates

Cons

- Some advanced scoring features sit behind higher tiers

- Credit-based models can complicate cost planning at scale

- Limited public detail on scoring methodology

Pricing: Mid-market self-serve plan plus higher tiers.

Who it fits: Small-to-mid teams that want breadth and guided actions.

Learn how AthenaHQ compares to AirOps here.

7. Otterly.AI

Best for: Small teams that want an affordable entry point for AI visibility monitoring

Key features

- Monitoring across major AI surfaces

- Prompt library for structured tracking

- Competitive benchmarks and alerts

- CSV exports for reporting

Pros

- Low entry price

- Fast setup and useful monitoring cadence

- Reporting works well for lightweight programs

Cons

- Monitoring-first approach

- Does not offer holistic prescriptive scoring across content signals

- Score-driven optimization requires other tools

Pricing: Low-cost entry tier with higher tiers for scale.

Who it fits: Teams that want monitoring before investing in full scoring.

Turn content scoring into shipped improvements

AEO content scoring tools give teams clarity. They show which pages are structurally ready for AI citation, which ones fall short, and what changes move the score. Scoring alone doesn't improve visibility. Progress happens when teams translate those signals into prioritized refreshes and published updates.

For teams already running strong SEO programs, content scoring adds a forward-looking layer. It gives you a structured way to improve citation likelihood across your portfolio and track how those changes influence AI visibility over time.

AirOps connects scoring inputs and AI search signals to governed execution, so teams can prioritize high-impact pages, run bulk refreshes, and publish updates from the same system that surfaced the opportunity.

Book a demo to see how AirOps connects AEO content scoring to bulk refresh execution and AI citation growth.

FAQs

What is the best AEO content scoring tool for SEO teams?

The best tool depends on how your team uses scoring. Profound fits enterprise teams that want broad engine coverage and ML-based scoring. Semrush fits teams that want an AEO layer inside an existing SEO suite. AirOps fits teams that want scoring inputs connected to bulk execution. Contently fits large teams that want detailed draft scoring with human editorial support.

Are free AEO content scoring tools worth it?

Free options help teams validate whether AI visibility matters in their category and test a small prompt set. Most free tiers limit engine coverage, scoring depth, or scale. Teams that want portfolio-wide scoring and repeatable refresh cycles typically move to paid plans.

How do AEO content scoring tools work?

These tools score pages against signals that influence AI citation probability, such as structure, semantic coverage, schema completeness, answer formatting, and entity clarity. Some tools use ML models trained on cited pages. Others use rule-based scoring plus NLP analysis. Strong tools pair the score with specific recommendations that teams can implement.
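
A rule-based check of the kind described above might look like the sketch below. It is a simplified illustration, not how any listed tool works: the three heuristics, the 60-word threshold, and the `rule_based_signals` helper are assumptions, and a production tool would use a real HTML parser rather than regular expressions.

```python
import re

def rule_based_signals(html: str) -> dict:
    """Count a few structural signals in a page's HTML (heuristic only)."""
    headings = re.findall(
        r"<h[2-3][^>]*>(.*?)</h[2-3]>", html, flags=re.I | re.S
    )
    paragraphs = re.findall(r"<p[^>]*>(.*?)</p>", html, flags=re.I | re.S)
    return {
        # Headings phrased as questions tend to mirror user prompts.
        "question_headings": sum(1 for h in headings if h.strip().endswith("?")),
        # Short paragraphs are easier to lift as direct answers.
        "concise_paragraphs": sum(1 for p in paragraphs if 0 < len(p.split()) <= 60),
        # Presence of any JSON-LD block, regardless of its type.
        "has_json_ld": "application/ld+json" in html.lower(),
    }

sample = (
    "<h2>What is AEO?</h2>"
    "<p>AEO is answer engine optimization.</p>"
    "<h2>Pricing</h2>"
    '<script type="application/ld+json">{}</script>'
)
signals = rule_based_signals(sample)
print(signals)
```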

Can AI automate AEO content scoring and optimization?

AI can automate scoring and generate recommended edits quickly. Humans still validate accuracy, voice, and claims before publishing. Teams get better results when the tool supports review checkpoints and makes approved updates easy to ship.

Do I need AEO content scoring tools if I already use Semrush or Ahrefs?

Yes. Traditional SEO platforms focus on rankings, backlinks, and technical audits. AEO content scoring tools focus on whether AI search systems can extract and cite your content for key prompts. Semrush offers AI add-ons, but teams often still need dedicated scoring logic or execution support to run improvements across a large library.
